Reactor and Proactor Pattern

1. Introduction to the Reactor and Proactor Patterns

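The Reactor and Proactor patterns come up frequently in network programming. Both are common design patterns in server-side development, and both provide high-performance concurrent I/O.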

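The Reactor pattern is usually built on I/O multiplexing mechanisms such as select/poll/epoll. The Proactor pattern is built on asynchronous I/O, such as I/O completion ports on Windows or the aio_*() family of functions on UNIX.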

References:

Proactor - An Object Behavioral Pattern for Demultiplexing and Dispatching Handlers for Asynchronous Events (the best article explaining the Reactor and Proactor patterns)

Pattern-Oriented Software Architecture Volume 2, Patterns for Concurrent and Networked Objects, 3.1 Reactor

Pattern-Oriented Software Architecture Volume 2, Patterns for Concurrent and Networked Objects, 3.2 Proactor

2. Reactor (also called Dispatcher or Notifier)

The Reactor architectural pattern allows event-driven applications to demultiplex and dispatch service requests that are delivered to an application from one or more clients.

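The usual Chinese rendering of Reactor, 反应器 ("reactor"), can be somewhat confusing. The pattern has two aliases, Dispatcher and Notifier, which may describe its role more clearly.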

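What exactly is the Reactor pattern?

To answer that, we have to start with event-driven development. For application servers, a key observation is that the CPU is far faster than I/O, so blocking the CPU on an I/O operation (for example, reading data from a socket) is clearly wasteful. A better approach is to split the work across multiple processes or threads, but that adds context-switching overhead. Imagine a process that spends 500 ms reading some data: during that time the scheduler switches to it three times, yet each time the CPU can do nothing useful and simply switches away again. Isn't that wasteful too?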

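This is where the pioneers turned to an event-driven, or callback-based, approach. The application registers a callback (an event handler) with an intermediary; when the I/O becomes ready, the intermediary generates an event and notifies the handler to process it.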

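Now let's look at the Reactor pattern. The event-driven approach above leaves a question open: how do we find out that I/O is ready, and who acts as the intermediary? The Reactor pattern's answer is a separate process (or thread) that waits and loops continuously. It accepts registrations from all handlers, asks the operating system whether I/O is ready, and, once it is, invokes the corresponding handler. This role is called the Reactor.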

The passage above is excerpted from: Netty那点事(四)Netty与Reactor模式 ("Netty Notes (4): Netty and the Reactor Pattern").
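To make that role concrete, here is a minimal single-threaded reactor sketched in Python. It assumes the standard-library `selectors` module as the demultiplexer (its `DefaultSelector` picks epoll, kqueue, poll or select depending on the platform); the `Reactor` class and its method names are hypothetical and not taken from any framework. A `socket.socketpair()` stands in for a real client connection.

```python
import selectors
import socket


class Reactor:
    """Minimal single-threaded reactor (hypothetical sketch)."""

    def __init__(self):
        # DefaultSelector wraps the best demultiplexer the platform offers.
        self._selector = selectors.DefaultSelector()

    def register(self, sock, handler):
        # Record which handler should run when `sock` becomes readable.
        self._selector.register(sock, selectors.EVENT_READ, handler)

    def unregister(self, sock):
        self._selector.unregister(sock)

    def run_once(self, timeout=None):
        # Ask the OS which registered handles are ready, then dispatch each one.
        for key, _mask in self._selector.select(timeout):
            key.data(key.fileobj)

    def close(self):
        self._selector.close()


# Demo: one end of a socketpair acts as the client, the other as the server.
client, server = socket.socketpair()
received = []
reactor = Reactor()
reactor.register(server, lambda sock: received.append(sock.recv(1024)))
client.sendall(b"ping")
reactor.run_once(timeout=1.0)  # the handler runs only because data is ready
reactor.unregister(server)
reactor.close()
client.close()
server.close()
```

Handlers never wait on the OS themselves; they run only after the reactor has confirmed that their handle is readable, which is the inversion of control described above.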

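Using the reactor in the ACE network programming framework takes only a few steps:

(1) Create an event handler to handle the event it is interested in.

(2) Register the handler with the reactor, declaring interest in that event and passing the reactor a pointer to the event handler that should process it.

The reactor framework then automatically:

(1) Maintains internal tables that associate the different event types with event handler objects.

(2) When an event the user has registered for occurs, calls back the corresponding method on the handler.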
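The same steps can be sketched with handler objects. The hook names (`get_handle`, `handle_input`, `handle_close`, `register_handler`, `remove_handler`, `handle_events`, `READ_MASK`) are borrowed from ACE's naming conventions, but this is a simplified Python illustration, not ACE's C++ API, and `EchoHandler` is a hypothetical concrete handler. The dictionary `_table` plays the role of the reactor's internal table that associates handles and event types with handler objects.

```python
import select
import socket

READ_MASK = 0x1  # event-type flag; name borrowed from ACE, value arbitrary


class EventHandler:
    """Base class; concrete handlers override the hook methods they need."""

    def get_handle(self):
        raise NotImplementedError

    def handle_input(self, handle):
        pass

    def handle_close(self, handle):
        pass


class Reactor:
    def __init__(self):
        # Internal table: handle -> (event mask, handler object).
        self._table = {}

    def register_handler(self, handler, mask):
        self._table[handler.get_handle()] = (mask, handler)

    def remove_handler(self, handler):
        handle = handler.get_handle()
        del self._table[handle]
        handler.handle_close(handle)

    def handle_events(self, timeout=None):
        # Wait for any handle registered for READ_MASK, then call back its handler.
        readers = [h for h, (mask, _) in self._table.items() if mask & READ_MASK]
        if not readers:
            return 0
        ready, _, _ = select.select(readers, [], [], timeout)
        for handle in ready:
            entry = self._table.get(handle)
            if entry is not None:  # an earlier callback may have removed it
                entry[1].handle_input(handle)
        return len(ready)


class EchoHandler(EventHandler):
    """Hypothetical concrete handler (step 1): echoes what the peer sends."""

    def __init__(self, sock, reactor):
        self.sock, self.reactor = sock, reactor

    def get_handle(self):
        return self.sock

    def handle_input(self, handle):
        data = handle.recv(1024)
        if data:
            handle.sendall(data)
        else:  # peer closed the connection
            self.reactor.remove_handler(self)

    def handle_close(self, handle):
        handle.close()


client, server = socket.socketpair()
reactor = Reactor()
reactor.register_handler(EchoHandler(server, reactor), READ_MASK)  # step (2)
client.sendall(b"hello")
reactor.handle_events(timeout=1.0)
echoed = client.recv(1024)
client.close()
reactor.handle_events(timeout=1.0)  # EOF: the handler removes itself
```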

2.1. Advantages and Disadvantages of the Reactor

The Reactor pattern offers the following benefits:

- Separation of concerns

- The Reactor pattern decouples application-independent demultiplexing and dispatching mechanisms from application-specific hook method functionality. The application-independent mechanisms can be designed as reusable components that know how to demultiplex indication events and dispatch the appropriate hook methods defined by event handlers. Conversely, the application-specific functionality in a hook method knows how to perform a particular type of service.

- Modularity, reusability, and configurability

- The pattern decouples event-driven application functionality into several components. For example, connection-oriented services can be decomposed into two components: one for establishing connections and another for receiving and processing data.

- Portability

- UNIX platforms offer two synchronous event demultiplexing functions, select() and poll(), whereas on Win32 platforms the WaitForMultipleObjects() or select() functions can be used to demultiplex events synchronously. By decoupling the reactor's interface from the lower-level operating system synchronous event demultiplexing functions used in its implementation, the Reactor pattern therefore enables applications to be ported more readily across platforms.

- Coarse-grained concurrency control

- Reactor pattern implementations serialize the invocation of event handlers at the level of event demultiplexing and dispatching within an application process or thread. This coarse-grained concurrency control can eliminate the need for more complicated synchronization within an application process.

The Reactor pattern can also incur the following liabilities:

- Restricted applicability

- The Reactor pattern can be applied most efficiently if the operating system supports synchronous event demultiplexing on handle sets. If the operating system does not provide this support, however, it is possible to emulate the semantics of the Reactor pattern using multiple threads within the reactor implementation. This is possible, for example, by associating one thread to process each handle.

- Non-pre-emptive

- In a single-threaded application, concrete event handlers that borrow the thread of their reactor can run to completion and prevent the reactor from dispatching other event handlers. In general, therefore, an event handler should not perform long duration operations, such as blocking I/O on an individual handle, because this can block the entire process and impede the reactor's responsiveness to clients connected to other handles.

- Complexity of debugging and testing

- It can be hard to debug applications structured using the Reactor pattern due to its inverted flow of control. In this pattern control oscillates between the framework infrastructure and the method call-backs on application-specific event handlers. The Reactor's inversion of control increases the difficulty of 'single-stepping' through the run-time behavior of a reactive framework within a debugger, because application developers may not understand or have access to the framework code.
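The non-pre-emptive liability can be observed directly. In this hypothetical Python sketch, a slow handler sleeps for 200 ms (standing in for blocking I/O or a long computation) after a second client's data has already arrived. Because the reactor has only one thread, the second handler cannot run until the first one returns.

```python
import selectors
import socket
import time

sel = selectors.DefaultSelector()
slow_client, slow_server = socket.socketpair()
fast_client, fast_server = socket.socketpair()
timestamps = {}


def slow_handler(sock):
    sock.recv(1024)
    # Another client's request arrives while this handler is still running...
    timestamps["fast_ready"] = time.monotonic()
    fast_client.sendall(b"y")
    # ...but this handler keeps the reactor's only thread busy for 200 ms.
    time.sleep(0.2)


def fast_handler(sock):
    sock.recv(1024)
    timestamps["fast_dispatched"] = time.monotonic()


sel.register(slow_server, selectors.EVENT_READ, slow_handler)
sel.register(fast_server, selectors.EVENT_READ, fast_handler)
slow_client.sendall(b"x")

# Single-threaded event loop: run until the fast handler has been served.
while "fast_dispatched" not in timestamps:
    for key, _mask in sel.select(timeout=1.0):
        key.data(key.fileobj)

delay = timestamps["fast_dispatched"] - timestamps["fast_ready"]
print(f"fast client waited {delay:.3f} s")

sel.close()
for s in (slow_client, slow_server, fast_client, fast_server):
    s.close()
```

The measured delay is never below the slow handler's 200 ms, however little work the fast handler itself needs.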

Reference: Pattern-Oriented Software Architecture Volume 2, Patterns for Concurrent and Networked Objects, 3.1 Reactor

3. Proactor

The Proactor architectural pattern allows event-driven applications to efficiently demultiplex and dispatch service requests triggered by the completion of asynchronous operations.

3.1. Example: A High-Performance Web Server

The following high-performance Web server example illustrates the Proactor pattern.

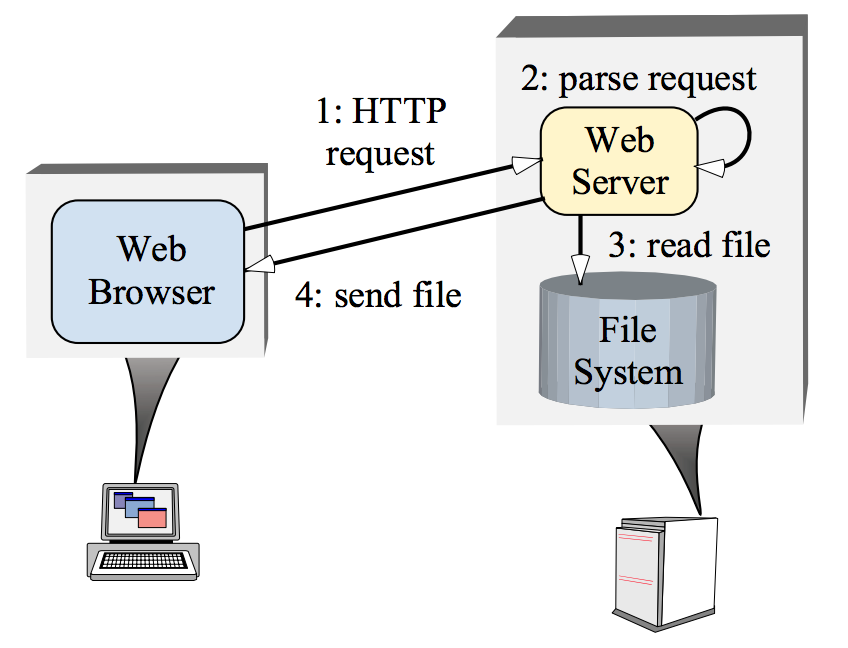

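When a user downloads content from a given URL, four steps take place:

(1) The browser establishes a connection to the Web server specified by the URL and sends it an HTTP GET request.

(2) The Web server receives the browser's CONNECT indication, accepts the connection, and reads and parses the request.

(3) The server opens and reads the requested file.

(4) Finally, the server sends the contents of the file back to the Web browser and closes the connection.

These four basic steps are shown in Figure 1.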

Figure 1: Typical Web Server Communication Software Architecture
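As a baseline, the four steps can be sketched as a synchronous function that serves one connection at a time. This is a hypothetical Python illustration: `serve_one`, the temporary document root and `index.html` are made up for the demo, a `socket.socketpair()` stands in for the TCP connection of step (1), and the parser assumes a well-formed request line. It performs no path sanitisation, so it is not safe to expose.

```python
import os
import socket
import tempfile


def serve_one(conn, docroot):
    """Steps (2)-(4) for one accepted connection, performed synchronously."""
    # (2) Read until the blank line that ends the HTTP request header.
    request = b""
    while b"\r\n\r\n" not in request:
        chunk = conn.recv(4096)
        if not chunk:
            break
        request += chunk
    # Assumes a well-formed request line such as "GET /index.html HTTP/1.0".
    method, path, _version = request.split(b"\r\n", 1)[0].decode("ascii").split(" ")
    if method != "GET":
        body, status = b"only GET is supported", "405 Method Not Allowed"
    else:
        # (3) Open and read the requested file (no path sanitisation: sketch only).
        try:
            with open(os.path.join(docroot, path.lstrip("/")), "rb") as f:
                body, status = f.read(), "200 OK"
        except OSError:
            body, status = b"not found", "404 Not Found"
    # (4) Send the file contents back and close the connection.
    header = f"HTTP/1.0 {status}\r\nContent-Length: {len(body)}\r\n\r\n"
    conn.sendall(header.encode("ascii") + body)
    conn.close()


# Demo: a socketpair stands in for step (1), the browser's TCP connection.
docroot = tempfile.mkdtemp()
with open(os.path.join(docroot, "index.html"), "wb") as f:
    f.write(b"<h1>hello</h1>")
browser, server_side = socket.socketpair()
browser.sendall(b"GET /index.html HTTP/1.0\r\n\r\n")
serve_one(server_side, docroot)
response = b""
while chunk := browser.recv(4096):
    response += chunk
browser.close()
```

While `serve_one` is blocked in `recv()` or `open()`, no other client can be served; the designs below are different ways of removing that limitation.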

3.1.1. A Multi-threaded Web Server

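Consider the simple case first. The most direct way to implement a concurrent Web server is to use multiple threads, dedicating one thread to each request, as shown in Figure 2.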

Figure 2: Multi-threaded Web Server Architecture

However, a Web server that dedicates a thread to each request has the following drawbacks:

- Threading policy is tightly coupled to the concurrency policy

- This architecture requires a dedicated thread for each connected client. A concurrent application may be better optimized by aligning its threading strategy to available resources (such as the number of CPUs via a Thread Pool) rather than to the number of clients being serviced concurrently.

- Increased synchronization complexity

- Threading can increase the complexity of synchronization mechanisms necessary to serialize access to a server’s shared resources (such as cached files and logging of Web page hits).

- Increased performance overhead

- Threading can perform poorly due to context switching, synchronization, and data movement among CPUs.

- Non-portability

- Threading may not be available on all OS platforms. Moreover, OS platforms differ widely in terms of their support for pre-emptive and non-preemptive threads. Consequently, it is hard to build multi-threaded servers that behave uniformly across OS platforms.
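A thread-per-connection server can be sketched as follows. This is hypothetical Python: `handle_client`, `thread_per_connection` and the `hits` counter are illustrative names, and socketpairs replace real client connections. Each client gets its own thread, so blocking `recv()` calls are harmless, but the shared hit counter (like the logging of Web page hits mentioned above) now needs a lock.

```python
import socket
import threading

hits = {}                     # shared resource: per-path hit counter
hits_lock = threading.Lock()  # every thread must serialize access to it


def handle_client(conn):
    # Blocking recv is acceptable here: only this client's thread waits on it.
    path = conn.recv(1024).split(b" ")[1].decode("ascii")
    with hits_lock:
        hits[path] = hits.get(path, 0) + 1
    conn.sendall(f"HTTP/1.0 200 OK\r\n\r\nserved {path}".encode("ascii"))
    conn.close()


def thread_per_connection(connections):
    """Dedicate one new thread to each connected client."""
    threads = [
        threading.Thread(target=handle_client, args=(c,), name=f"client-{i}")
        for i, c in enumerate(connections)
    ]
    for t in threads:
        t.start()
    return threads


clients, servers = zip(*(socket.socketpair() for _ in range(3)))
for c in clients:
    c.sendall(b"GET /index.html HTTP/1.0\r\n\r\n")
workers = thread_per_connection(servers)
for t in workers:
    t.join()
replies = [c.recv(1024) for c in clients]
for c in clients:
    c.close()
```

The number of threads grows with the number of clients rather than with the number of CPUs, which is exactly the coupling criticised in the first bullet.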

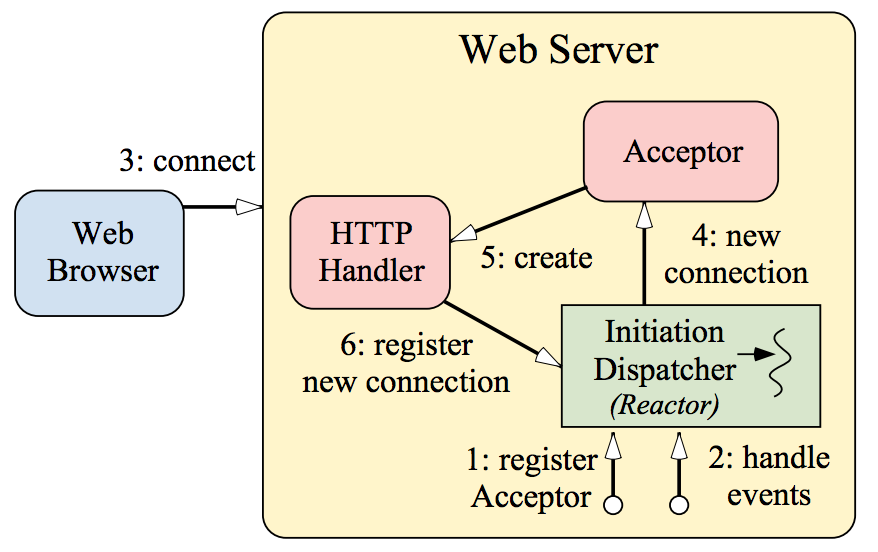

3.1.2. Implementing the Web Server with the Reactor Pattern

To overcome the drawbacks of the multi-threaded Web server, we can implement it with the Reactor pattern, as shown in Figures 3 and 4.

Figure 3: Client Connects to Reactive Web Server

Figure 4: Client Sends HTTP Request to Reactive Web Server

The main advantages of the reactive model are portability, low overhead due to coarse-grained concurrency control (that is, single-threading requires no synchronization or context switching), and modularity via the decoupling of application logic from the dispatching mechanism. However, this approach has the following drawbacks:

- Complex programming

- Programmers must write complicated logic to make sure that the server does not block while servicing a particular client.

- Lack of OS support for multi-threading

- Most operating systems implement the reactive dispatching model through the select system call. However, select does not allow more than one thread to wait in the event loop on the same descriptor set. This makes the reactive model unsuitable for high-performance applications since it does not utilize hardware parallelism effectively.

- Scheduling of runnable tasks

- In synchronous multithreading architectures that support pre-emptive threads, it is the operating system’s responsibility to schedule and timeslice the runnable threads onto the available CPUs. This scheduling support is not available in reactive architectures since there is only one thread in the application. Therefore, developers of the system must be careful to time-share the thread between all the clients connected to the Web server. This can be accomplished by only performing short-duration, non-blocking operations.
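The following hypothetical Python sketch shows where that complexity comes from. Because no handler may block, the reactor has to keep per-connection state (`inbuf`, `outbuf`), cope with requests that arrive in fragments, and switch from waiting for reads to waiting for writes once a response is ready, since a non-blocking `send()` may write only part of the buffer. `Connection` and `run_once` are illustrative names, and a `socket.socketpair()` replaces a real client.

```python
import selectors
import socket

sel = selectors.DefaultSelector()


class Connection:
    """Per-client state the reactor must keep because handlers cannot block."""

    def __init__(self, sock):
        self.sock = sock
        self.inbuf = b""
        self.outbuf = b""
        sock.setblocking(False)
        sel.register(sock, selectors.EVENT_READ, self)

    def on_readable(self):
        chunk = self.sock.recv(4096)
        if not chunk:                      # client went away mid-request
            self.close()
            return
        self.inbuf += chunk
        if b"\r\n\r\n" not in self.inbuf:  # partial request: back to the loop
            return
        path = self.inbuf.split(b" ")[1].decode("ascii")
        self.outbuf = f"HTTP/1.0 200 OK\r\n\r\nserved {path}".encode("ascii")
        sel.modify(self.sock, selectors.EVENT_WRITE, self)

    def on_writable(self):
        sent = self.sock.send(self.outbuf)  # may write only part of the buffer
        self.outbuf = self.outbuf[sent:]
        if not self.outbuf:
            self.close()

    def close(self):
        sel.unregister(self.sock)
        self.sock.close()


def run_once(timeout=None):
    for key, mask in sel.select(timeout):
        conn = key.data
        if mask & selectors.EVENT_READ:
            conn.on_readable()
        elif mask & selectors.EVENT_WRITE:
            conn.on_writable()


browser, server_side = socket.socketpair()
conn = Connection(server_side)
browser.sendall(b"GET /index.html HT")  # first fragment only
run_once(timeout=1.0)                   # buffered; the loop is not blocked
partial = conn.inbuf
browser.sendall(b"TP/1.0\r\n\r\n")      # rest of the request
run_once(timeout=1.0)                   # parse, then wait for writability
run_once(timeout=1.0)                   # write the response and close
response = browser.recv(1024)
browser.close()
sel.close()
```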

3.1.3. Implementing the Web Server with the Proactor Pattern

To overcome the drawbacks of the Reactor-based Web server, we can implement it with the Proactor pattern, as shown in Figures 5 and 6.

Figure 5: Client connects to a Proactor-based Web Server

Figure 6: Client Sends requests to a Proactor-based Web Server

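Recall that in the Reactor pattern, the operating system notifies the Web server when I/O is ready, and the Web server then invokes an event handler to carry out the subsequent processing. In the Proactor pattern, by contrast, the operating system notifies the Web server only after the I/O operation itself has completed (for example, once the data has already been copied into memory).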
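Python does not expose I/O completion ports or `aio_*()` portably, so the sketch below emulates the Proactor's asynchronous operation processor with a thread pool, which is the fallback the pattern itself describes for platforms without native support. `EmulatedProactor`, `async_read` and `handle_events` are hypothetical names. The key difference from a reactor is that the completion handler receives bytes that have already been read; it never calls `recv()` itself.

```python
import queue
import socket
from concurrent.futures import ThreadPoolExecutor


class EmulatedProactor:
    """Proactor sketch; a thread pool stands in for the OS's asynchronous
    operation processor (IOCP or aio_*), which Python does not expose portably."""

    def __init__(self, workers=2):
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._completions = queue.Queue()  # completion event queue

    def async_read(self, sock, nbytes, completion_handler):
        # Initiate the read and return at once; the I/O happens on a worker.
        def operation():
            try:
                result = sock.recv(nbytes)
            except OSError as exc:
                result = exc
            self._completions.put((completion_handler, result))

        self._pool.submit(operation)

    def handle_events(self, timeout=None):
        # Wait until one operation has *completed*, then dispatch its handler
        # on this (the application's) thread with the data already in memory.
        handler, result = self._completions.get(timeout=timeout)
        handler(result)

    def close(self):
        self._pool.shutdown(wait=True)


proactor = EmulatedProactor()
browser, server_side = socket.socketpair()
received = []


def on_request_read(data):
    # Completion handler: the request bytes have already been read for us.
    received.append(data)


proactor.async_read(server_side, 1024, on_request_read)  # issued before data exists
browser.sendall(b"GET /index.html HTTP/1.0\r\n\r\n")
proactor.handle_events(timeout=5.0)
proactor.close()
browser.close()
server_side.close()
```

The read is initiated before any data exists, and the application thread stays free until `handle_events` delivers the completion.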

3.2. POSIX aio_*() and Windows I/O completion ports

I/O completion ports are the mechanism for implementing the Proactor pattern efficiently on Windows NT. On some real-time POSIX platforms, the Proactor pattern is implemented with the aio_*() family of APIs.

3.3. Advantages and Disadvantages of the Proactor

The Proactor pattern offers the following benefits:

- Separation of concerns

- The Proactor pattern decouples application-independent asynchronous mechanisms from application-specific functionality. The application-independent mechanisms become reusable components that know how to demultiplex the completion events associated with asynchronous operations and dispatch the appropriate callback methods defined by concrete completion handlers. Similarly, the application-specific functionality in concrete completion handlers knows how to perform particular types of service, such as HTTP processing.

- Portability

- The Proactor pattern improves application portability by allowing its interface to be reused independently of the underlying operating system calls that perform event demultiplexing. For example, on real-time POSIX platforms the asynchronous I/O functions are provided by the aio_*() family of APIs. Similarly, on Windows NT, completion ports and overlapped I/O are used to implement asynchronous I/O.

- Encapsulation of concurrency mechanisms

- A benefit of decoupling the proactor from the asynchronous operation processor is that applications can configure proactors with various concurrency strategies without affecting other application components and services.

- Decoupling of threading from concurrency

- The asynchronous operation processor executes potentially long-duration operations on behalf of initiators. Applications therefore do not need to spawn many threads to increase concurrency. This allows an application to vary its concurrency policy independently of its threading policy. For instance, a Web server may only want to allot one thread per CPU, but may want to service a higher number of clients simultaneously via asynchronous I/O.

- Performance

- Multi-threaded operating systems use context switching to cycle through multiple threads of control. While the time to perform a context switch remains fairly constant, the total time to cycle through a large number of threads can degrade application performance significantly if the operating system switches context to an idle thread. For example, threads may poll the operating system for completion status, which is inefficient. The Proactor pattern can avoid the cost of context switching by activating only those logical threads of control that have events to process. If no GET request is pending, for example, a Web server need not activate an HTTP Handler.

- Simplification of application synchronization

- As long as concrete completion handlers do not spawn additional threads of control, application logic can be written with little or no concern for synchronization issues. Concrete completion handlers can be written as if they existed in a conventional single-threaded environment. For example, a Web server's HTTP handler can access the disk through an asynchronous operation, such as the Windows NT TransmitFile() function, so no additional threads need to be spawned.
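Serving many clients with few threads can be illustrated with Python's `asyncio`, whose API is completion-style: each `await` resumes with the finished result of an operation. On Windows its default event loop, `ProactorEventLoop`, is built on I/O completion ports; on Linux the same API runs on a selector-based (reactive) loop, so treat this as a sketch of decoupling threading from concurrency rather than a demonstration of OS-level asynchronous I/O. The handler names and the 50-client workload are hypothetical, and the demo listens on an ephemeral port on 127.0.0.1.

```python
import asyncio
import threading

served_by = set()  # identities of the threads that ran a handler


async def handle_client(reader, writer):
    # Each await resumes only once the operation has finished.
    request = await reader.readuntil(b"\r\n\r\n")
    path = request.split(b" ")[1].decode("ascii")
    served_by.add(threading.get_ident())
    writer.write(f"HTTP/1.0 200 OK\r\n\r\nserved {path}".encode("ascii"))
    await writer.drain()
    writer.close()
    await writer.wait_closed()


async def fetch(port, i):
    reader, writer = await asyncio.open_connection("127.0.0.1", port)
    writer.write(f"GET /page{i} HTTP/1.0\r\n\r\n".encode("ascii"))
    await writer.drain()
    reply = await reader.read()  # read until the server closes the connection
    writer.close()
    await writer.wait_closed()
    return reply


async def main(n_clients=50):
    server = await asyncio.start_server(handle_client, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]
    # All requests are in flight at the same time on a single thread.
    replies = await asyncio.gather(*(fetch(port, i) for i in range(n_clients)))
    server.close()
    await server.wait_closed()
    return replies


replies = asyncio.run(main())
```

All 50 requests are outstanding at once, yet every handler runs on the one thread that called `asyncio.run`, so the handlers need no locks.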

The Proactor pattern has the following liabilities:

- Restricted applicability

- The Proactor pattern can be applied most efficiently if the operating system supports asynchronous operations natively. If the operating system does not provide this support, however, it is possible to emulate the semantics of the Proactor pattern using multiple threads within the proactor implementation. This can be achieved, for example, by allocating a pool of threads to process asynchronous operations. This design is not as efficient as native operating system support, however, because it increases synchronization and context switching overhead without necessarily enhancing application-level parallelism.

- Complexity of programming, debugging and testing

- It is hard to program applications and higher-level system services using asynchrony mechanisms, due to the separation in time and space between operation invocation and completion.

- Scheduling, controlling, and canceling asynchronously running operations

- Initiators may be unable to control the scheduling order in which asynchronous operations are executed by an asynchronous operation processor.

Reference: Pattern-Oriented Software Architecture Volume 2, Patterns for Concurrent and Networked Objects, 3.2 Proactor

4. Tips

4.1. Why "the Reactor is synchronous I/O and the Proactor is asynchronous I/O"

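In the Reactor pattern, the operating system only reports that I/O is ready; the actual I/O operation (for example, a read or write) must still be carried out synchronously by the application process itself. The Proactor pattern goes a step further: the operating system performs the I/O operation (a read, for example, places the data directly into a memory buffer), and the handler only has to run its own logic, so I/O and application processing truly proceed asynchronously. That is why it is commonly said that "the Reactor is synchronous I/O and the Proactor is asynchronous I/O".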
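The difference comes down to who performs the read. In this hypothetical Python sketch, `reactor_style_read` waits for a readiness notification and then calls `recv()` itself, while `proactor_style_read` hands the whole read to a helper thread (standing in for the operating system) and is only called back with the finished data. In this simplification the callback runs on the helper thread; a full proactor would queue the completion and dispatch it from its own event loop.

```python
import selectors
import socket
import threading


def reactor_style_read(sock):
    """Reactor: the OS only says 'ready'; the application then performs recv()."""
    with selectors.DefaultSelector() as sel:
        sel.register(sock, selectors.EVENT_READ)
        sel.select()            # wait for the readiness notification
        return sock.recv(1024)  # the application does the (synchronous) read


def proactor_style_read(sock, on_complete):
    """Proactor (emulated): the read happens elsewhere and the handler is
    called with the finished result. A helper thread stands in for the OS."""
    def operation():
        on_complete(sock.recv(1024))

    t = threading.Thread(target=operation)
    t.start()
    return t  # the caller is free to do other work until completion


a, b = socket.socketpair()
a.sendall(b"reactor")
r_data = reactor_style_read(b)

results = []
t = proactor_style_read(b, results.append)
a.sendall(b"proactor")
t.join()
a.close()
b.close()
```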